Introducing JSON Schema¶

JSON is a data interchange format that has rapidly taken over as the defacto web-based data communication standard

in recent years.

JSONSchema is a way of specifying what a JSON document should contain. The Schema are, themselves, written in

JSON!

Whilst schema can become extremely complicated in some scenarios, they are best designed to be quite succinct. See below

for the schema (and matching JSON) for an integer and a string variable.

JSON:

{

"id": 1,

"name": "Tom"

}

Schema:

{

"type": "object",

"title": "An id number and a name",

"properties": {

"id": {

"type": "integer",

"title": "An integer number",

"default": 0

},

"name": {

"type": "string",

"title": "A string name",

"default": ""

}

}

}

Useful resources¶

Link |

Resource |

|---|---|

Useful web tool for inferring schema from existing json |

|

A powerful online editor for json, allowing manipulation of large documents better than most text editors |

|

The JSON standard spec |

|

The (draft standard) JSONSchema spec |

|

A front end library for generating webforms directly from a schema |

Human readability¶

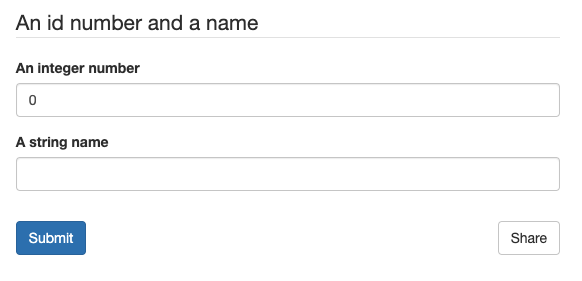

Back in our Requirements of the framework section, we noted it was important for humans to read and understand schema.

The actual documents themselves are pretty easy to read by technical users. But, for non technical users, readability can be

enhanced even further by the ability to turn JSONSchema into web forms automatically. For our example above, we can

autogenerate a web form straight from the schema:

Web form generated from the example schema above.¶

Thus, we can take a schema (or a part of a schema) and use it to generate a control form for a digital twin in a web interface without writing a separate form component - great for ease and maintainability.

Why not XML?¶

In a truly excellent three-part blog, writer Seva Savris takes us

through the ups and downs of JSON versus XML; well worth a read if wishing to understand the respective technologies

better.

In short, both JSON and XML are generalised data interchange specifications and can both can do what we want here.

We choose JSON because:

Textual representation is much more concise and easy to understand (very important where non-developers like engineers and scientists must be expected to interpret schema)

Attack vectors. Because entities in

XMLare not necessarily primitives (unlike inJSON), anXMLdocument parser in its default state may leave a system open to XXE injection attacks and DTD validation attacks, and therefore requires hardening.JSONdocuments are similarly afflicated (just like any kind of serialized data) but default parsers, operating on the premise of only deserializing to primitive types, are safe by default - it is only when nondefault parsering or deserialization techniques (such asJSONP) are used that the application becomes vulnerable. By utilising a defaultJSONparser we can therefore significantly shrink the attack surface of the system. See this blog post for further discussion.XMLis powerful… perhaps too powerful. The standard can be adapted greatly, resulting in high encapsulation and a high resilience to future unknowns. Both beneficial. However, this requires developers of twins to maintain interfaces of very high complexity, adaptable to a much wider variety of input. To enable developers to progress, we suggest handling changes and future unknowns through well-considered versioning, whilst keeping their API simple.XMLallows baked-in validation of data and attributes. Whilst advantageous in some situations, this is not a benefit here. We wish validation to be one-sided: validation of data accepted/generated by a digital twin should be occur within (at) the boundaries of that twin.Required validation capabilities, built into

XMLare achievable withJSONSchema(otherwise missing from the pureJSONstandard)JSONis a more compact expression than XML, significantly reducing memory and bandwidth requirements. Whilst not a major issue for most modern PCS, sensors on the edge may have limited memory, and both memory and bandwidth at scale are extremely expensive. Thus for extremely large networks of interconnected systems there could be significant speed and cost savings.